Finding Vulnerabilities at Scale: How SonicWall Built an AI-Assisted Security Pipeline for Large C Codebases

Take a large-scale C codebase: millions of lines, tens of thousands of files. At that scale, manual auditing can only examine a fraction of reachable surface area in any given cycle.

Modern Static Application Security Testing (SAST) does inter-procedural analysis, tracks taint across translation units and catches real bugs at scale. We use these tools and they consistently surface issues that code review alone would miss. But there's a class of issue where the vulnerability depends on how callers use a function rather than what the function itself does. Whether a memcpy call is dangerous depends on how callers allocate the destination buffer, not just on the function's own code. The lack of a destination buffer-size check, in isolation, may not be a vulnerability if every caller pre-allocates with a sufficient buffer. Conversely, a function that appears safe in isolation might be exploitable when a specific call chain passes unsanitized network input. These judgment calls, combining pattern recognition with codebase-wide context, are where existing tools hit their limits and where we thought AI models might add value.

We wanted to know if AI models could narrow that gap. Not replace human reviewers, but give them a focused queue of things worth investigating. To find out, we built an AI-assisted security pipeline and ran it against a large C codebase.

The post does not identify the target codebase. The pipeline architecture, stages, and lessons are described here in a general form applicable to any C/C++ codebase.

The reasoning is simple: if threat actors are using AI to find vulnerabilities in deployed binaries, defenders need to be using it first. Finding vulnerabilities before adversaries do, at a pace that matches theirs, is not optional. It's a defensive necessity. This pipeline is one approach we explored to help address that asymmetry.

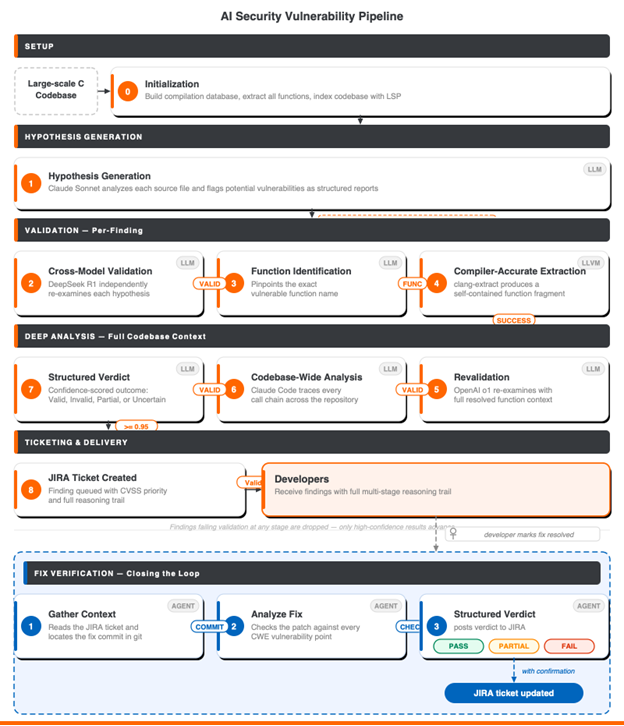

The pipeline runs nine stages of progressively expensive validation, filtering thousands of AI-generated hypotheses down to a prioritized set of findings. Those findings are ticketed in Jira, and developers work on them as part of their regular review queue. The pipeline's output is integrated into the team's security workflow.

Reviewers get a focused queue of issues that have already survived multiple rounds of automated cross-checking, and can validate or override each one without starting from scratch

This post walks through how we built it, what it found, and what we learned along the way.

The Pipeline: Nine Stages of Progressive Filtering

The core problem with using AI models for vulnerability detection is that they're too eager and too shallow. Submit a C source file and ask for vulnerabilities, and you'll get dozens of flagged issues, most of them theoretical, unreachable, or hallucinated. But some are real. A human reviewer scanning the same file might have missed them.

Our pipeline leans into that noise. It starts wide and filters aggressively, running each hypothesis through increasingly expensive validation. The early stages are cheap: a single LLM call per file, batch-processable overnight. The latter stages are expensive: full codebase traversal with Language Server Protocol (LSP) integration, one finding at a time. By the time a finding reaches a developer's queue, it has survived scrutiny from multiple model families, been cross-referenced against compiler-accurate source code, and had its call chains traced through the full codebase.

To make the pipeline concrete, we traced a constructed example through all nine stages. The scenario is representative of the kinds of findings the pipeline processes, but does not describe an actual vulnerability in any specific code base. The example: a buffer overflow flagged in a web management interface’s output encoding function.

Stage 0: Codebase Preparation

Before any AI touches the code, we compile the C codebase using the LLVM/Clang toolchain to generate a compilation database (compile_commands.json). This records the exact compiler flags, include paths, and source files for every translation unit in the build. We then run clang-extract against this database to bulk-extract every function in the codebase into standalone, compilable files, each containing the function body plus all its resolved dependencies (structs, typedefs, macros, extern declarations). This bulk extraction step is what makes Stage 4's per-function analysis possible.

Stage 1: Hypothesis Generation

Each C source file goes to Claude Sonnet via Amazon Bedrock, wrapped in a CrewAI agent with a security analyst persona.

All model inference in the pipeline runs through enterprise AI platforms such as Amazon Bedrock for Claude and DeepSeek, Azure OpenAI for o1, with Google Cloud Vertex AI configured as an additional option for Gemini models. We chose managed enterprise platforms because they provide stronger administrative controls, contractual privacy commitments and compliance tooling. In our deployment, source code submitted for analysis is handled according to provider terms, which state that it is not used to train foundation models. We still treated all code sent to external AI services as sensitive data and reviewed retention, logging, residency, and access controls service by service.

The model sees one file at a time and produces a structured vulnerability report for each potential issue it identifies. The agent follows a structured report template that forces specific output: a report ID, CVSS 3.1 scoring, exploitation conditions, impact analysis. This structure matters. It makes downstream stages' jobs easier and keeps the output machine parsable.

security_analyst: role: "Security Analyst" goal: "Identify potential security vulnerabilities in C/C++ source code" backstory: > You are a veteran embedded C programmer with deep expertise in security vulnerabilities ...

CrewAI agent configuration. The persona framing is a common prompt engineering convention. We haven't tested whether it materially affects output quality, but it keeps reports focused on security analysis rather than general code commentary.

This stage produced thousands of reports after initial triage – intentionally noisy. This behavior is closely related to the “code smell” problem in static analysis: patterns that are suspicious in isolation but may or may not be exploitable depending on the context. At this stage, we'd rather over-generate and filter than miss something real.

Our example finding: Claude Sonnet flagged a string encoding function in the web management module. The function takes user-controlled input (configuration parameters, for instance) and encodes special characters for safe HTML display. Characters like & become 5-character entities; " becomes a 7-character escape. The function writes encoded output into a destination buffer without performing an explicit size check within the function itself. A single input character can expand to multiple output bytes, which can lead to a buffer overflow.

Claude rated it CVSS 8.8: network-accessible, low complexity, high impact. Within the scope of a single file, the analysis was technically correct. The function itself does not perform bounds checking.

Stage 2: Cross-Model Validation

Different AI models don't make the same mistakes. That's the theory, and it's partially true. Models trained on similar data do share systematic biases; models often over-flag commonly known security-sensitive patterns when analyzing code without full context. Cross-validation doesn't help with shared biases. Where it helps is catching hallucinations: fabricated code paths, invented function signatures, misread control flow. When one model fabricates a vulnerability that doesn't exist in the source, another model from a different model family is unlikely to fabricate the same one. We designed the pipeline around this property.

Each Stage 1 hypothesis goes to DeepSeek R1, a completely different model family trained on different data with different reasoning patterns. We adopted DeepSeek R1 when it became available as it was a strong model from a different family, and we needed diversity. Its extended reasoning output also made the validation traces more interpretable, which helped when debugging pipeline behavior. DeepSeek's job is to critically evaluate the claim against the source code. Look for exaggeration. Look for hallucination. The env var routing pattern lets us swap models per stage without touching code:

# Per-stage model routing via environment variables # Claude via Amazon Bedrock SECURITY_ANALYZER_LLM_MODEL=bedrock/converse/us.anthropic.claude-3-7-sonnet-... # DeepSeek R1 via Amazon Bedrock VALIDATION_LLM_MODEL=bedrock/converse/us.deepseek.r1-v1:0 # OpenAI o1 via Azure OpenAI REVALIDATION_LLM_MODEL=azure/o1/<your-deployment>.openai.azure.com

Each pipeline stage uses a different model family routed through enterprise AI platforms. Swapping a model means changing one line.

In practice, agreement across different model families was a useful prioritization signal for us, although we have not run controlled experiments to quantify how much it reduces false positives or hallucinations.

Our example finding: DeepSeek R1 agreed. No buffer size parameter, limited bounds checking, unbounded memcpy writes. Two model families, same conclusion.

Stage 3: Function Name Extraction

A lightweight step: an LLM reads each validated report and extracts the specific function name that needs deeper analysis. This bridges AI analysis to compiler tooling. The AI identified what to look at; now the compiler will extract it precisely.

Stage 4: Compiler-Accurate Function Extraction

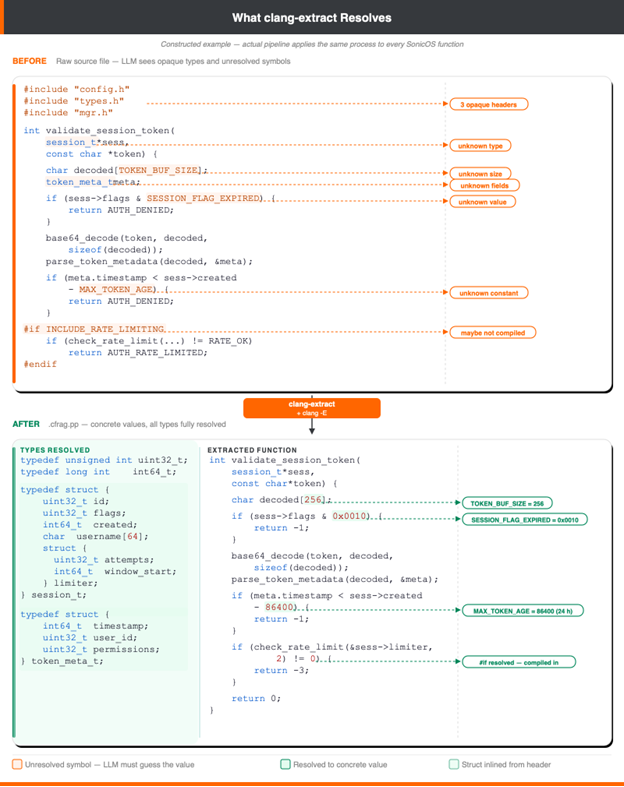

This is the technical move that the team felt was most impactful to analysis quality - though we don't have controlled measurements comparing pipeline runs with and without it. The qualitative difference was immediately obvious.

Early on, we tried regex-based extraction to isolate function bodies for deeper analysis. It failed to produce usable output. Missed type definitions, unresolved macros, broken dependencies. The extracted code was misleading rather than helpful.

We switched to clang-extract, using the compilation database generated in Stage 0. For each flagged function, clang-extract looks up the original compile flags from compile_commands.json and produces a standalone file containing the function body plus every declaration it depends on i.e., #define constants, typedef chains, struct definitions, enum declarations, and extern function signatures that were scattered across headers are all pulled into the same file. The function's code still references these by name; what changes is that the definitions are now visible rather than hidden behind #include directives. The extracted file is then preprocessed with clang -E, which expands #define macros to their concrete values and resolves #ifdef branches based on the actual build configuration. Some constants like enum values remain as named identifiers after preprocessing, but their definitions are in scope. The result is compiler-accurate context - the information a developer would need to understand the function in isolation. It doesn't replace call site knowledge, revision history, or domain understanding, which is why Stage 6 exists. But it's a substantial improvement over raw source files.

The difference matters. The diagram below shows a simplified constructed example of what this extraction looks like in practice. In the top is a raw source file: opaque #include references, unresolved macro constants like TOKEN_BUF_SIZE and SESSION_FLAG_EXPIRED, conditional #ifdef blocks, and type definitions hidden in other files. On the bottom, the same function after clang-extract preprocessing: every macro expanded to its concrete value, every typedef resolved to its actual type, conditional branches resolved based on the real build configuration, and struct definitions inlined from their original headers. The AI model analyzing the extracted version reasons about actual values instead of guessing what symbols mean.

Constructed example illustrating the extraction mechanics; actual pipeline findings use the same process on the target codebase.

Our example finding: clang-extract produced a 68-line preprocessed fragment of the encoding function. Every typedef resolved, every macro expanded, every extern declaration included. The function's full signature, its encoding logic, and the memcpy calls were all visible in a single, self-contained file. This fragment became the input for the next validation round.

Stage 5: Revalidation with Full Context

The extracted function fragment goes to a third model family, OpenAI o1, for revalidation. This is the first time any model sees the complete, compiler-accurate function context rather than just the raw source file.

o1 confirmed the finding: no bounds checking on the destination buffer, memcpy used without size limits, no mitigating typedefs or macro guards in the resolved dependencies. Three model families had now independently agreed.

But none of them could see how callers actually use this function.

Stages 6 and 7: Codebase-Wide Analysis and Classification

This is where the pipeline goes deep. Claude Code runs with access to the full source repository through the Serena MCP Server, which provides LSP (Language Server Protocol) integration. Instead of analyzing a function in isolation, the AI navigates the entire codebase. This is the most LLM intensive stage by a wide margin, but it catches false positives that no amount of function-level analysis can detect.

What LSP gives you that grep doesn't: symbol resolution with compiler accuracy, call chain tracing, reference finding across the full source tree. In a codebase with tens of thousands of files where the same function name might appear in multiple modules, text-search produces noise. LSP-powered navigation resolves to the exact definition and every actual call site, the same way a developer uses "Go to Definition" and "Find All References" in an IDE. LSP integration on a codebase this size is not perfectly reliable - the language server occasionally fails to resolve symbols in modules with complex build configurations or non-standard preprocessor usage. When it fails AI falls back to text-based navigation, which is noisier. We track these failures but haven't eliminated them.

The AI reads the vulnerability report, locates the function in the repo, traces callers, checks buffer allocation patterns, and inspects calling conventions. It produces a detailed analysis, which feeds into a structured classification step.

{

decision: Invalid,

confidence: 0.95,

reason: All callers correctly allocate buffers using 9x multiplier.

} Structured classification output. The pipeline converts free-form AI analysis into a verdict that downstream automation can consume.

Our example finding: Claude Code traced every caller of the encoding function across the codebase. It found 11 call sites. Every single one allocated the destination buffer using a sizing constant that multiplied the input length by 9, well above the worst-case 7x expansion. The function was not declared in any header. File-local only, no external callers possible. And a sibling encoding function in another module had explicit documentation: "destination buffer should be at least 9x the length of source."

The verdict flipped. Three AI models said this function is vulnerable when looking at the function in isolation. The fourth stage, with full codebase access, found no exploitable path under observed call patterns in the analyzed codebase and build context. The callers make it safe. Structured classification: Invalid, 0.95 confidence. This is a false positive that simpler tools would report as a real vulnerability. The code-base wide analysis surfaced mitigation that was not visible in earlier stages.

Stage 8: Ticketing

Findings that survive all stages with a Valid verdict, high confidence, and sufficient severity get ticketed automatically in Jira. Each ticket carries the complete reasoning trail from every stage. Developers reviewing a finding don't start from scratch. They see exactly why the pipeline flagged it and what analysis was performed.

Our example finding: The encoding function was classified Invalid and never reached ticketing stage. The pipeline determined that while the function lacks bounds checking in its own code, the calling convention across the codebase mitigates exploitation under the observed call patterns. This type of contextual reasoning is more difficult to achieve with file or function-level analysis alone.

That said, the function is still a code smell. It accepts a destination buffer with no size parameter and performs limited bounds checking as it relies entirely on every caller getting the allocation right. Today, all callers use the 9x multiplier. But, if a new call site gets added without that convention, the pattern could become exploitable. The pipeline classified this as Invalid for potential vulnerability purposes, but the finding still surfaced a maintenance concern worth flagging. Even the "false positives" produce value when they highlight patterns that are one mistake away from being exploitable.

This example was chosen because it illustrates the pipeline's full arc; three models agree when looking a function or source file in isolation, then the fourth reverses the verdict with deeper codebase-wide context. Not every finding follows this clean narrative. Many findings are straightforwardly valid bugs that survive all stages. Some are rejected for uninteresting reasons: dead code, test-only functions, deprecated modules. A smaller number is genuinely ambiguous, where the pipeline assigns medium confidence and defers to human judgment. The walkthrough shows the mechanism at its most dramatic, not the typical case.

Closing the Loop: Fix Verification

Finding vulnerabilities is only half the problem. The harder question is whether the fix worked. A developer reads the ticket, writes a patch, and marks it resolved. But did the patch address all the vulnerability points? Did it introduce something new?

In codebases of large size, a fix that looks correct in isolation can miss edge cases visible only in the broader context.

We built a separate verification tool, simpler than the nine-stage discovery pipeline but addressing a gap most organizations may leave open. It reads the original vulnerability ticket, locates the developer's fix in git, and runs a systematic analysis: does the fix touch the reported file and function, does it address each specific vulnerability point from the CWE, is the fix itself correct (no off-by-one errors, no signed/unsigned mismatches), and could it introduce new issues like resource leaks or data exposure. The verification tool uses the same model infrastructure as the discovery pipeline but with different prompts tuned for patch review rather than vulnerability hunting.

The verification agent follows a six-step workflow: extract vulnerability context from the ticket, locate the fix commit, map file paths between the report and the repository, analyze the fix against a structured checklist, produce a verdict, and optionally post its assessment back to the ticket. Verdicts are PASS (fix fully addresses the vulnerability), PARTIAL (some points addressed; others remain), or FAIL (fix does not address the core issue or introduces new problems).

The verification agent is structurally read-only. It can read code and reason about it, but it cannot modify files or write to the ticketing system on its own. Only the outer orchestration layer can post Jira comments or update labels, and only after explicit confirmation. Even if the agent decided it should write to a ticket, the tooling won't let it. This is a structural constraint in the agent configuration, not a policy.

The verification pipeline has processed a substantial number of tickets so far. It closes a gap that most organizations may leave open: automated, systematic review of whether security fixes actually do what they're supposed to. Discovery finds the problems. Verification confirms the solutions. The full cycle runs through the same infrastructure: flagging, ticketing, fixing, and verifying.

What We Learned

Cross-model validation was the noise filtering mechanism we found most valuable in practice, though we haven't run controlled experiments comparing it to alternatives like prompt diversification or temperature variation within a single model. If two model families independently agree on a finding, the chance of shared hallucination drops sharply. Claude Sonnet would flag something; DeepSeek R1 would evaluate it with different training biases and different reasoning patterns. The disagreements were informative. They weren't random noise. They pointed to ambiguous code where the "vulnerability" depended on context neither model had access to yet.

Cross-validation doesn't just filter false positives. It identifies findings that need deeper analysis, which turned out to be more valuable than the filtering itself.

Switching from regex-based function extraction to clang-extract was the biggest inflection point in our analysis quality, based on the team's observation of output before and after the switch. We don't have controlled A/B measurements, but the qualitative difference was immediate. Before the switch, we were feeding models extracted code that was missing type definitions, had unresolved macros, and lacked cross-file dependencies. The models would reason about code they couldn't fully see. After clang-extract gave us compiler-accurate fragments with every typedef resolved and every dependency included, the quality of analysis improved dramatically.

The lesson generalizes: giving AI models the same context a human developer would need to understand the code makes them substantially better at analyzing it. This is obvious in retrospect, but most LLM-for-code workflows still feed models raw, un-preprocessed source files.

The agentic analysis stage comes closest to replicating what a human security reviewer does, or at least, that's the team's subjective impression. We haven't formally compared its output against human reviewer output on the same findings. Claude Code navigates the full codebase through LSP. It traces call chains. It checks whether functions are even reachable from external input. It finds mitigating patterns that exist elsewhere in the codebase. Our walkthrough example shows this: three models analyzed the function in isolation and said it was vulnerable. The agentic stage traced every caller and found they all allocate safely. That's a contextual judgment. It used to require a senior engineer to spend time with the codebase. The pipeline attempts to do this systematically for each finding it processes, not just the ones someone thought to check.

The cost question is real. Each Stage 6 analysis involves a full agentic session against a large codebase, and LLM usage costs are non-trivial at scale. We haven't done a formal cost-per-finding analysis against the cost of equivalent human review time. The pipeline's value proposition is coverage; it analyzes every finding systematically, at hours when no human needs to be at a keyboard not necessarily cost efficiency per individual analysis.

None of these replaces human reviewers. The precision rate means some findings still need a human to say "no, that's fine." The pipeline surfaces things worth looking at, with enough supporting analysis to make the review efficient. But the final call is still a person's.

What Didn't Work

Our first attempt at function-level extraction was regex-based: pattern-match the function signature, grab everything between the opening and closing braces. We started with regex because it was the fastest path to a working prototype when you're validating whether the overall approach works; you reach for the simplest tool first. This failed badly on real-world C code. Preprocessor macros spanning multiple lines, nested structs with their own brace pairs, #ifdef blocks that created different function shapes depending on build configuration; formatting conventions accumulated over decades. The regex approach produced broken, incomplete extractions that were worse than feeding the model raw source files. The failures taught us what "correct extraction" required for this codebase, which led us to clang-extract, which solved the problem by using the actual compiler's understanding of the code.

Token limits drove a design decision we didn't plan for. Early iterations tried to analyze entire source files, but the larger source files in C codebase exceeded context windows even for models with generous limits. Some of the C files run to tens of thousands of lines. DeepSeek R1's 65K token ceiling made this acute. The shift to per-function extraction was forced by necessity.

In hindsight, per-function analysis turned out to be better: it forces the pipeline to be precise about which function contains the vulnerability, and it produces cleaner input for the validation models. But we'd be dishonest to say we planned it that way.

Honest Assessment

LLMs are enthusiastic vulnerability reporters. They'll flag missing destination buffer size check in memcpy as a potential buffer overflow, sprintf as a potential format string vulnerability, strlen as a potential DoS vector, if full call context is not provided. The entire multi-stage pipeline architecture is a response to this reality. You don't fix the noise problem by making the first model better. You fix it by building a system designed to handle noise.

The consequence of aggressive filtering is a low yield rate, from initial hypotheses to ticketed findings. Not a number you put on a marketing slide. But it reflects a deliberate design choice: aggressive filtering is better than noisy findings wasting developer time. Every false positive that reaches a developer's queue costs more than a true positive filtered out at Stage 2.

The codebase-wide analysis stage is our current pipeline’s bottleneck. Each analysis requires a full Claude Code session navigating the repository, expensive in both time and LLM cost. A significant fraction of eligible findings haven't been processed yet. Scaling this stage is an open engineering problem.

Results

The pipeline has been in active use within our security workflow since mid-2025. The model lineup has evolved as new options became available. Model selection was pragmatic, not strategic: we tested what was available and kept what worked.

The pipeline generates a large volume of initial hypotheses, then filters aggressively at each stage. Findings that survive all nine stages are ticketed in Jira, and resolve them as part of their regular security review queue. The pipeline's output is treated as a reliable input to the team's workflow, not a side experiment that gets checked occasionally. That adoption is the strongest evidence that the filtering works. If the queue were mostly noise, the team would have stopped pulling from it.

The severity distribution skews toward medium-severity findings, with a meaningful fraction classified as high or critical. This makes sense for a mature codebase: the obviously dangerous patterns were caught by existing tools years ago. What remains are the subtler issues that require cross-file context to identify.

The low yield rate from hypotheses to tickets is not a weakness. It's the pipeline working as intended. We'd rather over-generate and confidently filter than produce a conservative shortlist and miss something critical. The multi-stage validation exists so we can afford to start noisy. The cost of running nine stages against a hypothesis that turns out to be false is far lower than the cost of missing a real vulnerability in production firmware.

What's Next

Our primary engineering focus is scaling Stage 6. Stage 6 performs codebase-wide analysis using LLM. It's the slowest stage (LSP indexing for large codebases can be time-consuming) and most expensive (due to LLM cost). We're working on optimized prompting strategies, selective processing for high-severity findings, and parallelized execution.

We're also consolidating the pipeline's three separate sub-projects into a unified package with proper packaging, test infrastructure, and a CLI. Right now, running a new batch of files through the pipeline requires knowing which Python script handles which stage. That's fine for the team that built it; it's not OK for anyone else.

We considered open sourcing the pipeline, but in its current form it's a collection of scripts tightly coupled to our build system and internal infrastructure. At this stage, the reusable value lies primarily in the architecture rather than the current implementation. The patterns described in this post; multi-model cross-validation, compiler-accurate function extraction via clang-extract, LSP-powered agentic codebase analysis are general enough to apply to any large C/C++ codebase. If you maintain a codebase at a similar scale, the approach is reproducible with the tools and models referenced here.

The models can all be swapped. Claude Sonnet, DeepSeek R1, OpenAI o1, Claude Code. The .env configuration makes model changes a one-line edit. What matters is the pipeline engineering: the multi-stage architecture that handles noise by design, the compiler-accurate context extraction that gives models the information they need, the progressive filtering that balances recall with precision. The models will keep improving. The architecture is what makes the pipeline work today. That said, the pipeline's output quality is not guaranteed to be model-independent. Upgrading Claude Sonnet versions already changed the noise profile at Stage 1. The architecture handles swaps gracefully, but each swap requires validation.

We built this pipeline because we expect AI to accelerate vulnerability research and triage across the industry. If adversaries are using AI to find vulnerabilities, the defenders need to move at least as fast. The pipeline is not a finished product – it’s a working system that helps our team investigate more security hypotheses systematically, and it gets better with every LLM model upgrade and every engineering iteration.

Share This Article

An Article By

An Article By

Saikiran Madugula

Software Engineer at SonicWall

Saikiran Madugula

Software Engineer at SonicWall